
Creating HVM and PV domUs on Xen 4.3 / Debian 7

Over the course of developing the OpenPplSoft runtime, I’ve provisioned several servers in a Virtual Private Cloud (VPC) on AWS. Doing so gave me a high level of flexibility, easy snapshotting of EBS volumes, a fast connection to the Internet, and the ability to easily set up private subnets. However, I’ve reached a point where compilation times for OPT are too long on my EC2 instances, mainly because EBS volumes are network-attached storage devices. I could adjust my setup on AWS, but I wouldn’t be able to justify the cost increase with my limited budget.

I did some research and settled on running a local Xen machine; Xen happens to be the same hypervisor employed by AWS. I had a server sitting around running Windows Server 2008, but I hadn’t logged in to it for a while, so I decided to use it to host Xen. I swapped out two 160GB WD hard drives for two 240GB Intel SSDs and planned out my goals:

- Ability to run three domUs at once (a “domU” is essentially a virtual machine in Xen; dom0 is the operating system running Xen itself):

- Windows Server 2008 R2 Datacenter Edition, for running PeopleSoft app and web servers, in addition to being a PeopleSoft development workstation.

- Oracle Linux 6, for running Oracle 11g, which in turn hosts a PeopleSoft database.

- Debian 7, for compiling and running OpenPplSoft.

- All three domUs must have static IPs on a private, domU-only network (requires libvirt, which I describe in a later post)

- All three domUs must be able to connect to the Internet.

- dom0 must have a static IP.

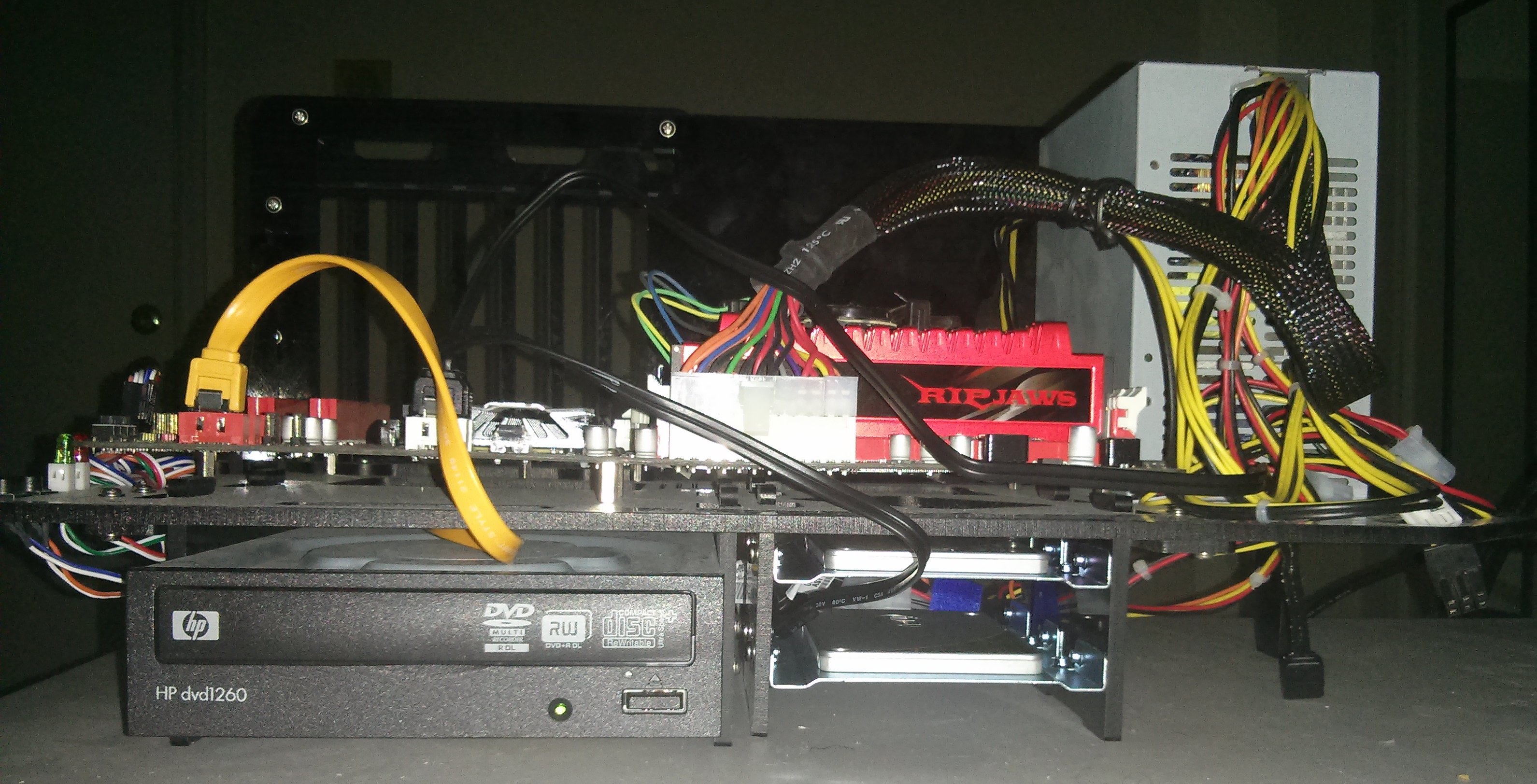

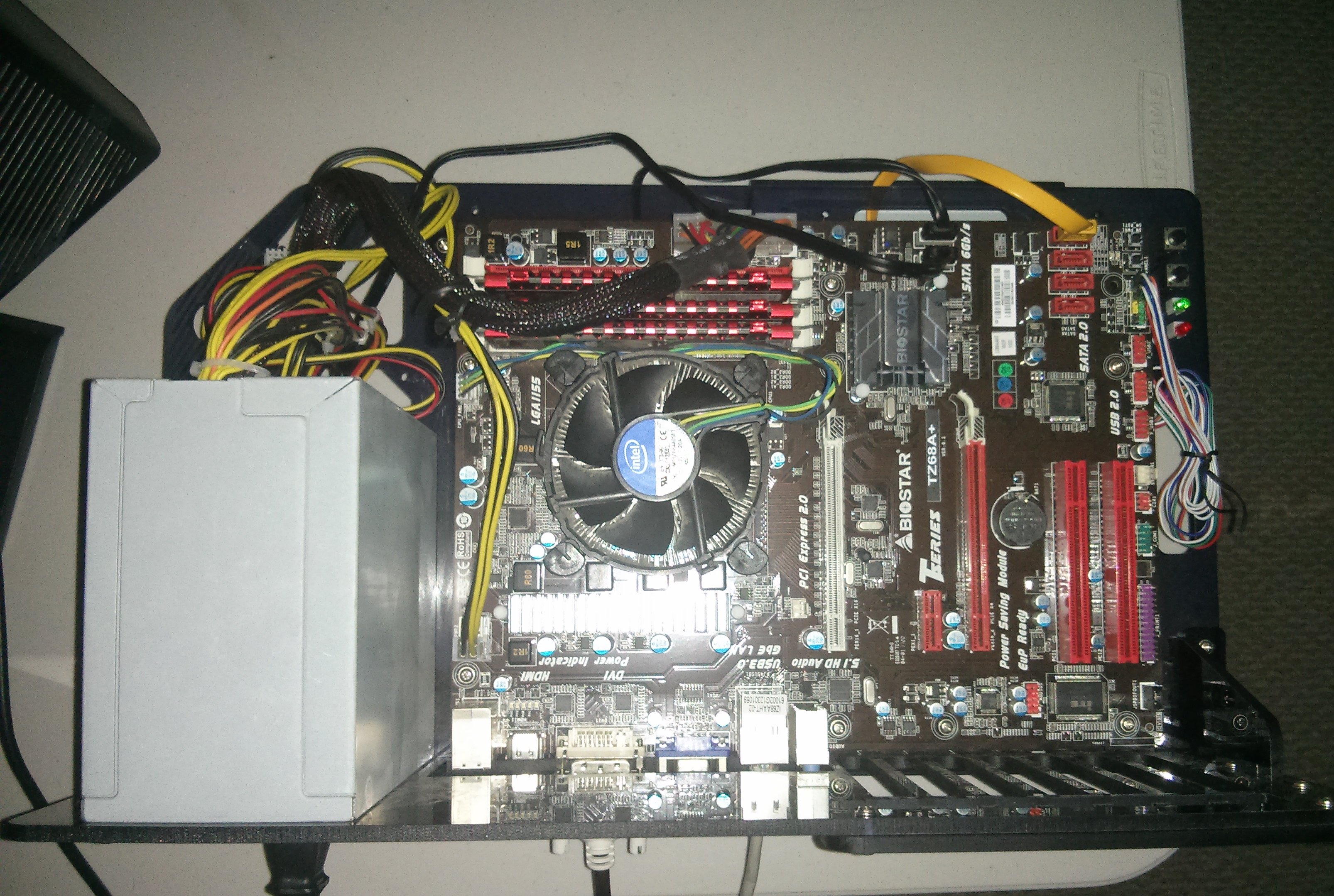

The Machine Running dom0

I have a desktop server that I’ve upgraded piecemeal over the years; the only original component is the PSU. The CPU is an Intel Core i5, the motherboard holds three 4GB memory modules (12GB in total), and I swapped in the two SSDs to ensure that this whole effort wouldn’t be for naught.

A Note about XenServer from Citrix

I considered using the XenServer solution from Citrix and played around with it for a little while. The upside is that XenCenter, the application used to manage XenServer instances, is a very comprehensive and well-done application. The downside is that XenServer runs on CentOS, a distribution I have little familiarity with. I decided that when I inevitably needed to change operating system settings on dom0, it would be better to be running an OS I have some experience with. I did manage to get a Windows Server VM running on XenServer, but when it came time to customize the networking settings, I had no success. That’s when I decided to drop XenServer and just work with a Xen-enabled Linux kernel on Debian.

A Note about Chipset Firmware Settings

Thankfully, the motherboard on my dom0 machine uses plain BIOS rather than the horror that is UEFI; applying the procedure in this guide to a UEFI-equipped system may require additional steps. Regardless, you should review your firmware settings for anything that should be changed prior to installing Xen. Although firmware control panels vary greatly, here are the changes I made to mine prior to installing Xen:

- Advanced -> CPU Configuration -> set Intel Virtualization Technology to Enabled.

- Advanced -> SATA Configuration -> changed SATA Mode from IDE to AHCI Mode (unrelated to virtualization but better to do this now rather than later).

- Advanced -> CPU Configuration -> set Power Technology to Disabled (leaving this enabled may or may not cause issues when running Xen; I disabled it to be safe).

- Chipset -> North Bridge -> set VT-D to Enabled (this is virtualization-related).

- Advanced -> USB Configuration -> set EHCI Hand-off to Enabled.

Install Debian 7 as Dom0

- You’ll need to obtain a copy of the Debian Stable NetInstall x64 disk. I used UNetbootin on a spare Debian machine to create a bootable USB drive containing the contents of the NetInstall disk.

- You may also need to inject non-free firmware into the install disk if your target machine has hardware requiring non-free drivers. My target machine has a Realtek Ethernet adapter which requires this firmware. If you don’t know whether your target machine needs non-free firmware, skip this step and continue with the setup process. If the installer warns you about missing non-free firmware, come back to this step and try again.

- Open the non-free firmware repository in a browser window.

- Navigate to the wheezy directory, then the current directory.

- Download firmware.tar.gz to your filesystem.

- Extract the archive into a new directory called firmware and move this directory to the root of the mounted USB drive.


- Unmount and remove the USB drive from the spare machine.

- Boot the target machine from the USB drive (you may need to adjust boot priorities in your BIOS settings).

- The Debian installer should appear onscreen. Here are the selections I made:

- Set hostname to entxen1.

- Left domain name blank.

- Selected the ftp.us.debian.org archive mirror.

- Left HTTP proxy blank.

- Left root password blank to disable the root account.

- Entered Matt Quinn for full name.

- Entered mquinn as username.

- Partitioned disks:

- Selected Manual.

- Created an empty partition table on the hard drive.

- Created a 4.0GB primary partition at the beginning of the disk for use as the Debian (dom0) partition. Used the ext4 filesystem, mounted as /, labeled the partition DebianDom0, and left the bootable flag set to off.

- Created a 2.0GB primary partition at the beginning of the free space for use as dom0 swap. File system is swap.

- Created a 243.0GB primary partition at the beginning of the free space for use as the LVM partition that will contain domU disks. File system is of type “physical volume for LVM.”

- Selected “Configure the Logical Volume Manager”.

- Pressed “Yes”.

- Created a volume group called “vg0,” selected /dev/sdb3 (the 243.0GB partition) as the sole device for the volume group.

- Not creating any logical volumes at this time, so pressed “Finish.”

- Selected “Finish partitioning and write changes to disk.”


- Installation process started.

- When prompted to install software components, I left “SSH server” and “standard system utilities” selected, leaving all others deselected.

- Pressed “No” when asked to install GRUB to MBR (only press “Yes” if the HD is at /dev/sda; if you are using a USB install disk like I am, the install disk is almost certainly at /dev/sda).

- Entered /dev/sdb to install GRUB to the hard disk and not the install disk.


- Installation completed.


Switch from the Stable Channel to the Testing Channel

- I want to work with the latest available Linux kernel and the latest available Xen packages on this machine, so I’m going to switch to the testing package repositories. If you continue on the stable channel, you will almost certainly run into issues, because I’ve found that the Linux kernel in stable typically lags behind the one in testing by several minor version iterations (roughly 6 to 8 months).

- Boot the target machine and log in to Debian.

- First update all packages from the stable repositories:

sudo apt-get update

sudo apt-get upgrade

sudo apt-get dist-upgrade

- Then switch to the testing channel:

sudo nano /etc/apt/sources.list

- Replace all occurrences of “wheezy” with “testing”.

sudo apt-get clean

sudo apt-get update

sudo apt-get upgrade

sudo apt-get dist-upgrade

sudo apt-get autoremove

sudo reboot
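If you'd rather not make the sources.list edit by hand in nano, it can be done non-interactively. The sketch below is a hypothetical helper (switch_to_testing is my own name, not a Debian tool); it assumes GNU sed, which Debian ships by default, and keeps a .bak copy of the original file:

```shell
#!/usr/bin/env bash
# Hypothetical helper: replace every occurrence of "wheezy" with "testing"
# in an APT sources file. Assumes GNU sed (Debian's default).

switch_to_testing() {
    local sources="${1:-/etc/apt/sources.list}"
    # -i.bak edits the file in place and saves the untouched original as <file>.bak
    sed -i.bak 's/wheezy/testing/g' "$sources"
}

# Example (as root): switch_to_testing /etc/apt/sources.list
```

After running it, the apt-get clean/update/upgrade/dist-upgrade/autoremove sequence and the reboot still apply.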

Install Xen

- Log into Debian on the target machine.

sudo apt-get install xen-linux-system

egrep '(vmx|svm)' /proc/cpuinfo

- Verify that all your CPU cores are listed. If nothing comes back, your hardware does not support x86 virtualization extensions (or they are disabled in the firmware), and Xen will not be able to run HVM guests such as Windows.

- Prioritize Xen at boot time over non-Xen kernels:

sudo dpkg-divert --divert /etc/grub.d/08_linux_xen --rename /etc/grub.d/20_linux_xen

sudo update-grub

sudo reboot

- Verify that the target machine now boots the Xen-aware kernel by default at startup.
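The egrep check above can be turned into a pass/fail test that compares the number of logical CPUs reporting vmx (Intel VT-x) or svm (AMD-V) with the total number of logical CPUs. This is a sketch, and check_virt_extensions is a hypothetical name. Run it before booting into the Xen kernel: under Xen, dom0's /proc/cpuinfo may not show these flags even when the hardware supports them.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report how many logical CPUs advertise hardware
# virtualization (vmx = Intel VT-x, svm = AMD-V). Returns 0 only if every
# logical CPU listed in the cpuinfo file does. Assumes GNU grep.

check_virt_extensions() {
    local cpuinfo="${1:-/proc/cpuinfo}"
    local total with_ext
    # One "processor" line per logical CPU
    total=$(grep -c '^processor' "$cpuinfo")
    # Count "flags" lines containing vmx or svm as whole words
    with_ext=$(grep -cE '^flags.*\b(vmx|svm)\b' "$cpuinfo")
    echo "$with_ext of $total logical CPUs report vmx/svm"
    [ "$with_ext" -gt 0 ] && [ "$with_ext" -eq "$total" ]
}

# Example: check_virt_extensions || echo "no usable virtualization extensions"
```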

Configure Xen

- Log into Xen on the target machine.

- It’s preferable to give dom0 a fixed amount of RAM rather than allow it to “balloon” (shrink and grow) as domUs start and stop. I set up a 2GB swap space, so I’ll give dom0 2GB of RAM:

sudo nano /etc/default/grub

- Add the line:

GRUB_CMDLINE_XEN="dom0_mem=2048M"

sudo update-grub

sudo nano /etc/xen/xend-config.sxp

- Change (dom0-min-mem 196) to (dom0-min-mem 2048) and make sure the line is uncommented.

- Change (enable-dom0-ballooning yes) to (enable-dom0-ballooning no) and make sure the line is uncommented.

- Change (vnc-listen '127.0.0.1') to (vnc-listen '0.0.0.0') and make sure it’s uncommented.
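The GRUB and xend-config.sxp edits above can also be scripted. This is a minimal sketch assuming GNU sed and the stock line formats shown above; set_dom0_mem and tune_xend_config are hypothetical helper names, and both are safe to run more than once.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for the dom0 memory settings. Assumes GNU sed and the
# stock line formats used in this post.

# Set (or replace) GRUB_CMDLINE_XEN; run update-grub afterwards.
set_dom0_mem() {
    local grub_file="${1:-/etc/default/grub}"
    local mem="${2:-2048M}"
    local line="GRUB_CMDLINE_XEN=\"dom0_mem=${mem}\""
    if grep -q '^GRUB_CMDLINE_XEN=' "$grub_file"; then
        # Replace the existing setting rather than adding a second one
        sed -i "s|^GRUB_CMDLINE_XEN=.*|${line}|" "$grub_file"
    else
        printf '%s\n' "$line" >> "$grub_file"
    fi
}

# Apply the three xend-config.sxp changes, uncommenting each line if needed.
# In sed's basic regular expressions, ( and ) match literal parentheses.
tune_xend_config() {
    local sxp="${1:-/etc/xen/xend-config.sxp}"
    sed -i \
        -e 's/^#*[[:space:]]*(dom0-min-mem [0-9]*)/(dom0-min-mem 2048)/' \
        -e 's/^#*[[:space:]]*(enable-dom0-ballooning [a-z]*)/(enable-dom0-ballooning no)/' \
        -e "s/^#*[[:space:]]*(vnc-listen '[0-9.]*')/(vnc-listen '0.0.0.0')/" \
        "$sxp"
}

# Example (as root): set_dom0_mem && update-grub && tune_xend_config
```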


- It’s possible to pin a CPU for dom0’s exclusive use, but I don’t anticipate that the domUs will ever crowd out dom0; CPU activity won’t ever be that high. I’m going to let Xen use any of the 4 cores available on my target machine. If you want to pin one or more CPUs for dom0 use only, you’ll need to modify /etc/default/grub and /etc/xen/xend-config.sxp.

- By default, the xendomains script saves (suspends) any running guests to disk when dom0 shuts down. I wanted to disable this feature and just have Xen shut down the guests:

sudo nano /etc/default/xendomains

- Change the value of XENDOMAINS_SAVE from /var/lib/xen/save to "" (two double quotes).

- Change the value of XENDOMAINS_RESTORE from true to false.

sudo reboot
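The two /etc/default/xendomains edits can be made with sed as well. This is a sketch assuming GNU sed and that both variables are already present in the file, as they are in the stock one; disable_xendomains_save is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: stop the xendomains script from saving guests to disk
# when dom0 shuts down and from restoring them on boot. Assumes GNU sed.

disable_xendomains_save() {
    local conf="${1:-/etc/default/xendomains}"
    sed -i \
        -e 's|^XENDOMAINS_SAVE=.*|XENDOMAINS_SAVE=""|' \
        -e 's|^XENDOMAINS_RESTORE=.*|XENDOMAINS_RESTORE=false|' \
        "$conf"
}

# Example (as root): disable_xendomains_save /etc/default/xendomains
```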

Set up a Network Bridge for Use By Xen domUs

- If you’re just looking for a way to give your virtual machines an IP address on your local network, and don’t need a separate network for inter-VM communication, this section details all the networking setup you need to do. In a later post, I do away with the bridge created here in favor of a bridge created with libvirt, which allows for a separate, VM-only network.

sudo nano /etc/network/interfaces

- Modify this file so that it looks like the one below.

- Write the file to disk and reboot.

- Run sudo brctl show to verify that xenbr0 is listed.

- Run sudo ip addr show to verify that xenbr0 has been assigned an IP address. eth0 itself should no longer have one, since it now serves only as a port on the bridge.

- Check to make sure dom0 is still able to connect to the Internet (wget www.spacex.com).

/etc/network/interfaces

auto lo

iface lo inet loopback

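auto eth0

iface eth0 inet manual

auto xenbr0

iface xenbr0 inet dhcp

bridge_ports eth0

bridge_stp yes

Compared with the default file, the eth0 stanza changes from allow-hotplug to auto and from dhcp to manual, and xenbr0 takes over DHCP. STP can be turned off (bridge_stp off) if you’re especially concerned about performance. Keep notes like these out of the file itself: ifupdown does not support end-of-line comments, so a trailing # comment is treated as part of the line.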
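Besides brctl show, bridge membership can be read directly from sysfs: a bridge device has a bridge/ subdirectory under /sys/class/net/<name>, and each attached port appears under /sys/class/net/<name>/brif/. The helper below is a hypothetical sketch of that check; the SYSFS_NET override is my own addition so the function can be exercised against a fake directory tree.

```shell
#!/usr/bin/env bash
# Hypothetical helper: confirm that a bridge exists and that a given NIC is
# one of its ports by inspecting sysfs. SYSFS_NET defaults to /sys/class/net.

check_bridge() {
    local bridge="${1:-xenbr0}"
    local port="${2:-eth0}"
    local net="${SYSFS_NET:-/sys/class/net}"

    # Only bridge devices have a "bridge" subdirectory
    if [ ! -d "$net/$bridge/bridge" ]; then
        echo "$bridge is not a bridge (or does not exist)" >&2
        return 1
    fi
    # Each port attached to the bridge shows up under brif/
    if [ ! -e "$net/$bridge/brif/$port" ]; then
        echo "$port is not attached to $bridge" >&2
        return 1
    fi
    echo "$port is a port of $bridge"
}

# Example: check_bridge xenbr0 eth0
```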

Install the Xen Toolstack

- Xen is administered via a toolstack (xl, in this post). I also installed the xen-tools package by running sudo apt-get install xen-tools.

- Note that /etc/xen-tools/xen-tools.conf supplies defaults only to the xen-tools helper scripts, such as xen-create-image. xl does not read it; xl takes each domU’s settings from the domU-specific configuration file you write (more on that in the next section).

- At this point, I attempted to create a test VM using xm create but received an error message: “Error: 'NoneType' object has no attribute 'rfind'.” I found out that this occurred due to the switch from the xm to the xl toolstack in Xen 4.3 (see this post for more details). To resolve this error, I did the following:

sudo nano /etc/default/xen

- Set TOOLSTACK to xl (that is a letter “l”, not a numeral 1).

sudo reboot

- Log in and verify that you can run sudo xl successfully.

- Check that a process called xend is not running (ps -e | grep xend). It should not be running when the xl toolstack is used. Some people have modified the /etc/init.d/xen script to disable the call to xend_start on startup, but I didn’t need to, as xend did not start after I switched over to the xl toolstack.

- If you plan to run a Windows domU, you will need to install QEMU; as of Xen 4.3, QEMU no longer comes with Xen. Install it separately using sudo apt-get install qemu, then reboot.

- Note: review the output of man xl.cfg for details on how to write Xen .cfg files, which describe the domUs that Xen should run.
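The TOOLSTACK change and the xend check from the xm-to-xl switch can be scripted as well. This is a sketch assuming GNU sed and pgrep (from procps); set_toolstack_xl and xend_stopped are hypothetical names. pgrep -x xend matches the same process name that ps -e | grep xend looks for.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for the xm -> xl switch. Assumes GNU sed and pgrep.

set_toolstack_xl() {
    local conf="${1:-/etc/default/xen}"
    if grep -q '^#*[[:space:]]*TOOLSTACK=' "$conf"; then
        # Rewrite the existing (possibly commented-out) setting
        sed -i 's/^#*[[:space:]]*TOOLSTACK=.*/TOOLSTACK=xl/' "$conf"
    else
        echo 'TOOLSTACK=xl' >> "$conf"
    fi
}

# Succeeds (exit status 0) only if no process named exactly "xend" is running
xend_stopped() {
    ! pgrep -x xend > /dev/null
}

# Example (as root): set_toolstack_xl && reboot
# After the reboot:  xend_stopped && echo "xend is not running"
```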

Creating a Windows Server 2008 R2 domU

- If you don’t plan on running a Windows VM, you can skip this section. The steps here apply to any instance of Windows running as a domU, with the overarching point being that Xen uses the virtualization capabilities in your hardware to run Windows in an approach known as HVM. Contrast this with paravirtualization (PV), where the guest knows about the hypervisor and can delegate certain tasks to be run by dom0.

- Note: It is possible to install PV drivers on a Windows domU, but I had no success with this. It wasn’t critical for me to get them working since I won’t be running my Windows Server domU 24/7, so I didn’t try very hard to install them. More on this later.

- On a spare machine, mount a USB flash drive (I mounted mine at /mnt/verbatim) and insert the installation CD or DVD for the version of Windows you want to run as a virtual machine into a CD-ROM drive (mine was /dev/sr0).

- The install disc needs to be converted to an ISO image in order for Xen to present it to the virtual machine as a CD drive.

- Create an image of the disc using sudo dd if=/dev/sr0 of=/mnt/verbatim/WinServer2008R2.iso bs=1M.

- Unmount the USB drive from the spare machine and mount it on dom0 (I mounted mine at /mnt/verbatim).

- Log in to dom0.

- Create a hard drive for the Windows VM you’re about to create. This is best done by creating a logical volume within a volume group on an LVM partition, which makes it easy to expand and shrink volumes later on. You can have Xen use a file on the dom0 filesystem instead, similar to VDI files in VirtualBox, but this is less efficient than using a logical volume.

sudo lvcreate -L80G -nwinsrv2008vm vg0

- This creates an 80GB logical volume named “winsrv2008vm” in the volume group called “vg0”.

- Create a .cfg file for the Windows VM; I created /home/mquinn/winsrv2008vm.cfg and used the following contents:

/home/mquinn/winsrv2008vm.cfg

builder = 'hvm'

memory = 3096

vcpus = 4

name = "PSAPPSRV"

vif = ['mac=24:f2:04:a0:2a:37,bridge=xenbr0']

disk = ['phy:/dev/vg0/winsrv2008vm,hda,w','file:/mnt/verbatim/WinServer2008R2.iso,hdc:cdrom,r']

# Try to boot from CD-ROM first ("d"), then try hard disk ("c").

boot = "dc"

vnc = 1

vnclisten = "0.0.0.0"

vncpasswd = "psappsrv"

# Necessary for booting as of 12-06-2013

device_model_version = "qemu-xen"

# 07-05-2014 EDIT: To run under Xen 4.3, I replaced this line:

# device_model_override = "/usr/bin/qemu"

# with this:

device_model_override = "/usr/bin/qemu-system-x86_64"

bios = "seabios"

- Some notes about this configuration:

- Per the note above, Windows instances are virtualized using hardware assistance and require the value “hvm” for the builder property.

- I’ve assigned 3GB of RAM to this VM, and I’m allowing it to use all 4 cores presented by the processor.

- I’ve given this VM the name “PSAPPSRV”; you’ll use the name in some xl commands. For example, to power this VM off immediately (the equivalent of pulling the plug), I run sudo xl destroy PSAPPSRV.

- The vif property lists network interfaces available to the guest. Here I’ve made the xenbr0 bridge created earlier available to the guest.

- The disk property lists disks available to the guest. Here I’ve made the logical volume created earlier available as the primary hard drive (hda), and I’ve created a virtual CD-ROM drive (hdc) holding the Windows ISO.

- The boot property defines the device priority used during the boot sequence; I want to run the Windows installer, so I’ve put the CD-ROM before the hard drive.

- I’ve enabled VNC, told the server to listen for connections on all interfaces (0.0.0.0), and assigned a password. You won’t be able to run the Windows installer without establishing a VNC connection to the guest.

- The last three properties in the file are ones I found while searching the Internet to resolve problems booting this VM on Xen 4.3. I believe these lines are required because QEMU is no longer bundled with recent releases of Xen. They may or may not be necessary depending on your setup and your version of Xen, so you may want to try booting without them first and see if you run into any issues.
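The vif line above hard-codes a MAC address. If you need one for a new domU, one option is to use the 00:16:3e prefix (the OUI registered to XenSource, and the prefix xl uses when it generates a MAC itself for a vif without a mac= setting) followed by three random bytes. The function below is a hypothetical sketch of that approach; it does not check for collisions with addresses already on your network, so verify uniqueness yourself.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a random MAC address in the Xen OUI (00:16:3e).
# Reads three random bytes from /dev/urandom. No collision check is done.

random_xen_mac() {
    local bytes
    # -An: no offsets, -N3: read 3 bytes, -tx1: one hex byte per field
    bytes=$(od -An -N3 -tx1 /dev/urandom)
    # $bytes is deliberately unquoted so it splits into three printf arguments
    printf '00:16:3e:%s:%s:%s\n' $bytes
}

# Example: echo "vif = ['mac=$(random_xen_mac),bridge=xenbr0']"
```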


- To start the virtual machine, run

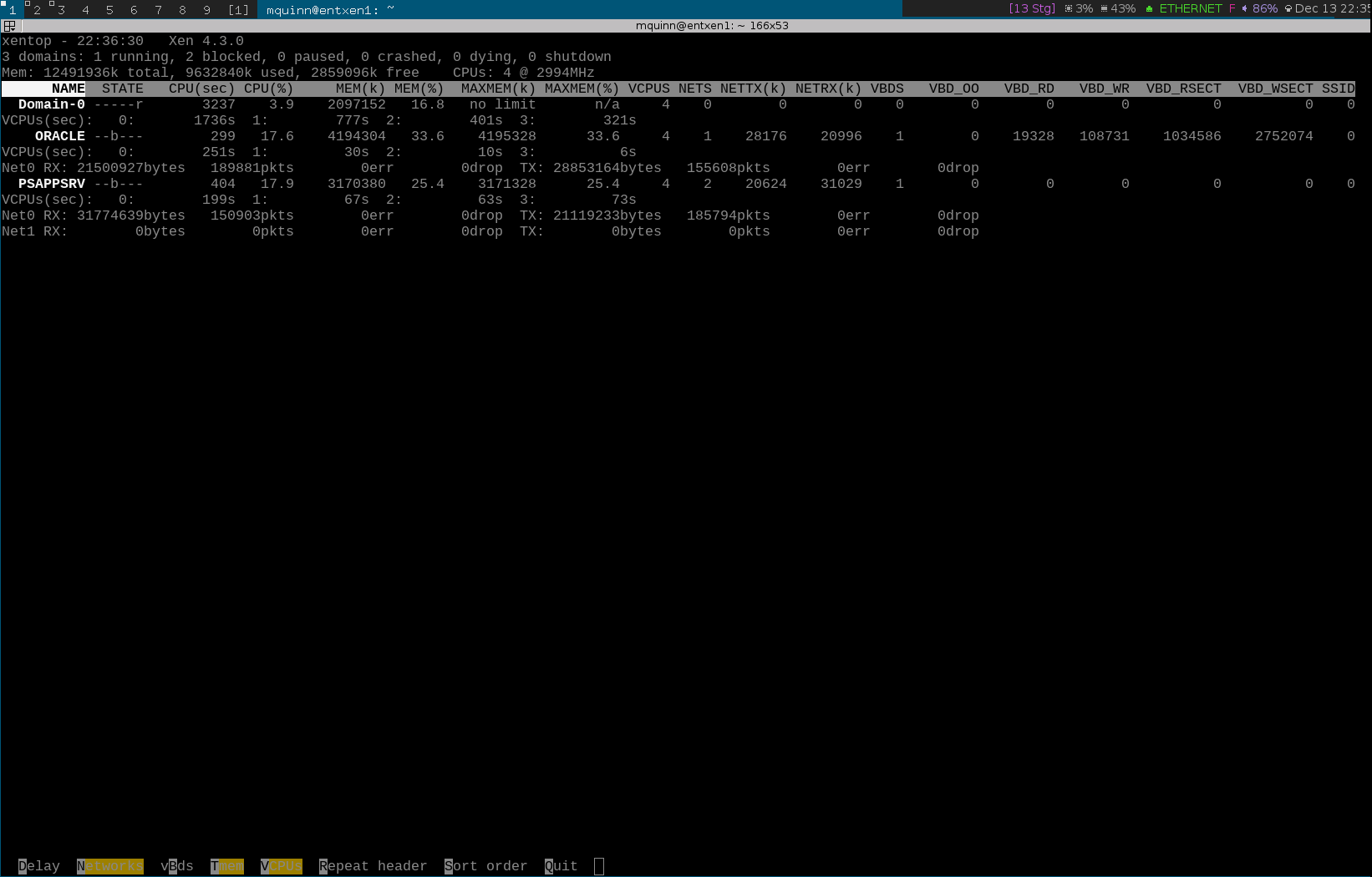

sudo xl create ~/winsrv2008vm.cfg. - You should see your VM running in the output of

sudo xl top, which provides some valuable metrics about the domUs running on your system:

- You should also be able to connect to your VM over VNC. I used gvncviewer on a spare Debian machine and connected to the Windows VM using gvncviewer 192.168.0.6. Keep in mind that the VNC server is provided by the QEMU device model running in dom0 (vnclisten = "0.0.0.0" makes it listen on all of dom0’s interfaces), so the address to connect to is dom0’s, not an address assigned to the guest.

- You should now see the Windows installer in your VNC session. Step through the installer as you would for a native hardware installation.

- Once you’ve installed Windows, you can shut down the VM and “remove” the ISO from the virtual CD-ROM drive attached to the VM. In ~/winsrv2008vm.cfg, change the second element in the disk array to ',hdc:cdrom,r' (the leading comma is important). The VM will now be presented with an empty CD-ROM drive.

- In the future, when the VM is running, you can insert an ISO into this drive using the following command, replacing the name of the VM and the ISO path with the appropriate values:

sudo xl cd-insert PSAPPSRV hdc /mnt/verbatim/WinServer2008R2.iso

- Finally, at this point, you’ll likely want to enable Remote Desktop on the Windows machine rather than continue using a VNC client.
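The “eject” edit to the disk array can also be scripted. This hypothetical helper assumes the CD-ROM entry is written in the single-quoted 'file:<path>,hdc:cdrom,r' form used in this post, and uses GNU sed to blank out just the ISO path while leaving the hard drive entry alone:

```shell
#!/usr/bin/env bash
# Hypothetical helper: empty the virtual CD-ROM in an xl .cfg file by turning
# 'file:/path/to.iso,hdc:cdrom,r' into ',hdc:cdrom,r'. Assumes GNU sed and
# the quoting style used in this post.

eject_cdrom_in_cfg() {
    local cfg="$1"
    # [^']* stops at the closing quote, so only the CD-ROM element is touched
    sed -i "s|'file:[^']*,hdc:cdrom,r'|',hdc:cdrom,r'|" "$cfg"
}

# Example (with the VM shut down): eject_cdrom_in_cfg ~/winsrv2008vm.cfg
```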

A Note About Windows PV Drivers

- Installing PV drivers on Windows domUs allows for increased performance. Unfortunately, every time I tried to install them, my Windows install got trashed and would show a BSOD on boot.

- First I tried to install the signed drivers from this page.

- I downloaded the MSI for Windows Server 2008 R2 64 bit.

- I ran the installer.

- I rebooted Windows.

- A BSOD appeared on screen (DRIVER_IRQL_NOT_LESS_OR_EQUAL).

- After restoring a working image of the Windows VM logical volume, I tried to install the unsigned drivers from here.

- I downloaded gplpv_Vista2008x64_0.11.0.372.msi.

- In a command window, I ran bcdedit /set testsigning on to enable installation of test-signed drivers.

- I ran the installer.

- I rebooted Windows.

- A BSOD appeared on screen (DRIVER_IRQL_NOT_LESS_OR_EQUAL).

- Getting the PV drivers working wasn’t critical for me, so I just continued without them. If you attempt this, make sure to make an image of your logical volume first. If your Windows guest reboots without encountering a BSOD, go to Device Manager and ensure that “Xen Net Device Driver” and “Xen Block Device Driver” are present. Additionally, when dom0 shuts down in the future, Windows should shut down automatically.

Creating a Paravirtualized Debian VM

- The procedure for creating a Linux-based domU in Xen is somewhat different from the one for Windows. When you first boot the domU to run the installer for the distribution of Linux you intend to run, you must point Xen to the location of a Xen-aware kernel and ramdisk. Once you’ve installed the distribution to disk, you can switch over to the newly installed (and Xen-aware) kernel and ramdisk on the domU disk itself.

- Mount a USB flash drive on a spare machine and download initrd.gz and vmlinuz for the Wheezy installer from this repository to the drive.

- Log in to dom0.

- Unmount the USB drive from the spare machine and mount it on dom0 (I mounted it at /mnt/kingston).

- Create a logical volume for the VM’s hard drive:

sudo lvcreate -L30G -ndebianvm vg0

- Create a .cfg file for the new VM and insert the following:

/home/mquinn/debianvm.cfg

kernel = "/mnt/kingston/vmlinuz"

ramdisk = "/mnt/kingston/initrd.gz"

extra = "debian-installer/exit/always_halt=true -- console=hvc0"

vcpus = 4

memory = 2048

name = "EVM"

vif = ['mac=a4:bc:24:ff:ca:9d,bridge=xenbr0']

disk = ['phy:/dev/vg0/debianvm,xvda,w']

device_model_version = "qemu-xen"

device_model_override = "/usr/bin/qemu"

bios = "seabios"

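- Notes about this configuration:

- Note the kernel and ramdisk properties, which were not needed in the Windows .cfg file.

- The extra property contains directives that I found on the Debian wiki. Its contents are appended to the guest kernel’s command line; console=hvc0 sends console output to the Xen paravirtual console, which is what xl console attaches to.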

- Start the VM using sudo xl create ~/debianvm.cfg.

- Using VNC to connect to the VM is unnecessary (and may not be possible; I’m not sure on that one). The Debian installer is text-based, so just run sudo xl console EVM on dom0, replacing “EVM” with the name you gave your VM. To exit the console, press Ctrl + ].

- Once you’ve installed the guest OS, shut down the VM and make the following modifications to the .cfg file:

- Remove the ramdisk, kernel, and extra lines.

- Add the line:

bootloader = "pygrub"

- Now when you start the VM, pygrub reads the boot configuration on the guest disk and boots the kernel and ramdisk installed there.
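The post-install .cfg edits (dropping kernel, ramdisk, and extra, then adding bootloader = "pygrub") can be scripted. The hypothetical switch_to_pygrub below assumes one setting per line, as in the .cfg files in this post, and GNU sed; run it only while the domU is shut down. It works the same way on a .cfg that has no extra line.

```shell
#!/usr/bin/env bash
# Hypothetical helper: after the installer has finished, remove the
# installer-only kernel/ramdisk/extra lines from an xl .cfg file and boot via
# pygrub instead. Assumes GNU sed and one setting per line.

switch_to_pygrub() {
    local cfg="$1"
    sed -i \
        -e '/^[[:space:]]*kernel[[:space:]]*=/d' \
        -e '/^[[:space:]]*ramdisk[[:space:]]*=/d' \
        -e '/^[[:space:]]*extra[[:space:]]*=/d' \
        "$cfg"
    # Append the bootloader line only if one isn't already present
    if ! grep -q '^[[:space:]]*bootloader[[:space:]]*=' "$cfg"; then
        echo 'bootloader = "pygrub"' >> "$cfg"
    fi
}

# Example (with the VM shut down): switch_to_pygrub ~/debianvm.cfg
```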

Creating a Paravirtualized Oracle Linux VM

- The procedure for creating an Oracle Linux domU is very similar to that of creating a Debian domU. You still need to point Xen to a Xen-aware kernel and ramdisk when starting the instance for the first time, but the difference here is that Oracle Linux comes with a Xen-aware kernel and ramdisk in the installation media; there’s no need to download separate files.

- On my laptop, I connected an external CD-ROM drive containing an install disk for Oracle Linux Release 6 Update 4.

sudo mount /dev/sr0 /mnt/extcdrom

- I then copied the kernel (/mnt/extcdrom/isolinux/vmlinuz) and ramdisk (/mnt/extcdrom/isolinux/initrd.img) from the installation disc to an external USB drive.

- I then unmounted the CD drive but left it connected to the laptop.

- I made an image of the disk and placed it on a separate USB drive:

sudo dd if=/dev/sr0 of=/mnt/verbatim/OL6Update4.iso bs=1M

- Logged in to dom0.

- I unmounted and disconnected both USB drives and the CD drive from the laptop, then mounted the two USB drives on dom0.

- Created a 70GB logical volume named oraclevm in the vg0 volume group that I created during installation:

sudo lvcreate -L70G -noraclevm vg0

- Created the .cfg file for the Oracle Linux VM, with contents as follows:

/home/mquinn/oraclevm.cfg

kernel = "/mnt/kingston/vmlinuz"

ramdisk = "/mnt/kingston/initrd.img"

vcpus = 4

memory = 4096

name = "ORACLE"

vif = ['mac=a4:cd:11:eb:50:7a,bridge=virbr0']

disk = ['phy:/dev/vg0/oraclevm,xvda,w','file:/mnt/verbatim/OL6Update4.iso,hdc:cdrom,r']

device_model_version = "qemu-xen"

device_model_override = "/usr/bin/qemu"

bios = "seabios"

- Start the VM using sudo xl create ~/oraclevm.cfg.

- Run sudo xl console ORACLE on dom0 to connect to the Oracle Linux console.

- Once you’ve installed the guest OS, shut down the VM and make the following modifications to the .cfg file:

- Remove the ramdisk and kernel lines.

- Add the line:

bootloader = "pygrub"

- Now when you start the VM, it will boot from the kernel and ramdisk present on the guest disk.

- Note: As of this writing, eth0 does not come up by default on Oracle Linux 6. To bring the eth0 interface up at boot, edit /etc/sysconfig/network-scripts/ifcfg-eth0 and change the ONBOOT parameter to yes. When you reboot Oracle Linux, eth0 will start automatically.
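The ONBOOT change can also be made from a shell inside the Oracle Linux domU. This is a sketch with a hypothetical helper name, enable_onboot; it assumes GNU sed (standard on Oracle Linux) and rewrites any existing ONBOOT line, quoted or not, or appends one if the file has none.

```shell
#!/usr/bin/env bash
# Hypothetical helper: make a RHEL-style interface start at boot by setting
# ONBOOT=yes in its ifcfg file. Assumes GNU sed.

enable_onboot() {
    local ifcfg="${1:-/etc/sysconfig/network-scripts/ifcfg-eth0}"
    if grep -q '^ONBOOT=' "$ifcfg"; then
        # Covers ONBOOT=no as well as quoted forms such as ONBOOT="no"
        sed -i 's/^ONBOOT=.*/ONBOOT=yes/' "$ifcfg"
    else
        echo 'ONBOOT=yes' >> "$ifcfg"
    fi
}

# Example (as root inside the Oracle Linux domU): enable_onboot
```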


Miscellaneous Notes

- You may want to bring one or more domUs up when dom0 starts.

sudo nano /etc/default/xendomains

- Note the path specified for XENDOMAINS_AUTO, or change it to a path of your own.

- In the directory given by XENDOMAINS_AUTO, create symlinks to the .cfg files of each domU you want to start automatically when dom0 starts.

- Reboot dom0.

- Verify that the domUs are started automatically by reviewing the output of sudo xl top.
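Creating the autostart symlinks can be wrapped in a small function. This is a sketch; link_autostart is a hypothetical name, and you should pass whatever directory XENDOMAINS_AUTO names on your system. It uses readlink -f from GNU coreutils so each link points at an absolute path.

```shell
#!/usr/bin/env bash
# Hypothetical helper: symlink domU .cfg files into the XENDOMAINS_AUTO
# directory so the xendomains script starts them when dom0 boots.

link_autostart() {
    local auto_dir="$1"
    shift
    mkdir -p "$auto_dir"
    local cfg
    for cfg in "$@"; do
        # readlink -f gives an absolute target, so the link resolves correctly
        # wherever it lives; ln -f replaces an existing link of the same name
        ln -sf "$(readlink -f "$cfg")" "$auto_dir/$(basename "$cfg")"
    done
}

# Example (as root; use the directory XENDOMAINS_AUTO names on your system):
# link_autostart /etc/xen/auto /home/mquinn/debianvm.cfg /home/mquinn/oraclevm.cfg
```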

- If you are running Xen on an SSD, you may want to enable TRIM. Some resources suggest forgoing this in favor of a cron job that trims the disk once a week or so, but the only downside to trimming in realtime involves degraded performance during large delete operations, which will not occur often on my setup.

sudo nano /etc/fstab

- For the / partition, add discard and noatime to the options string (noatime disables writing access timestamps on file reads; it’s not TRIM-related but improves performance).

- For the swap partition, add discard to the options string.

sudo nano /etc/lvm/lvm.conf

- Change issue_discards from 0 to 1. This makes LVM issue discards to the SSD when a logical volume is removed or shrunk; TRIM for files deleted inside a filesystem is handled by the discard mount option.

sudo update-initramfs -u -k all

sudo reboot

- Run sudo fstrim -v / to trim unused blocks on demand; after the changes in this section, blocks freed by subsequent deletions will be trimmed automatically.
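The fstab and lvm.conf edits can be scripted as in the sketch below. add_fstab_discard and enable_lvm_discards are hypothetical helpers: the first assumes a standard whitespace-separated fstab and a POSIX awk (mawk or gawk), and rewrites only the / and swap entries (rewritten entries come out tab-separated); the second assumes GNU sed. Both skip options that are already set. Keep a copy of /etc/fstab before running either against the real files, since a broken fstab can leave dom0 unbootable.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for the TRIM changes: add "discard,noatime" to the /
# entry and "discard" to swap entries in an fstab, and set
# issue_discards = 1 in lvm.conf.

add_fstab_discard() {
    local fstab="${1:-/etc/fstab}"
    local tmp
    tmp=$(mktemp)
    awk '
        # Append opt to the options field ($4) unless it is already there
        function addopt(opt) {
            if ($4 !~ ("(^|,)" opt "(,|$)")) $4 = $4 "," opt
        }
        BEGIN { OFS = "\t" }
        /^[ \t]*#/ || NF < 4 { print; next }   # comments and blank lines
        $2 == "/"    { addopt("discard"); addopt("noatime") }
        $3 == "swap" { addopt("discard") }
        { print }
    ' "$fstab" > "$tmp"
    # Copy back over the original so its owner and permissions are kept
    cat "$tmp" > "$fstab"
    rm -f "$tmp"
}

enable_lvm_discards() {
    local conf="${1:-/etc/lvm/lvm.conf}"
    # \1 keeps the original indentation and spacing around "="
    sed -i 's/^\([[:space:]]*issue_discards[[:space:]]*=[[:space:]]*\)0/\11/' "$conf"
}

# Example (as root): add_fstab_discard && enable_lvm_discards
```

Afterwards, run update-initramfs and reboot as in the manual steps.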


Useful References

I found the following to be good resources while setting things up and troubleshooting:

- https://wiki.debian.org/Xen

- http://debian-handbook.info/browse/wheezy/sect.virtualization.html

- http://wiki.xenproject.org/wiki/Xen_Beginners_Guide

- http://blog.tickmeet.com/2013/11/setting-up-a-windows-server-2012-r2-virtual-machine-with-xen-on-ubuntu/

- http://rootprompt.apatsch.net/2012/04/11/windows-7-domu-on-xen-4-hvm-with-debian-squeeze-dom0/

- http://rabexc.org/posts/how-to-get-started-with-libvirt-on

- http://wiki.xen.org/wiki/DebianGuestInstallationUsingDebian_Installer

- http://www.rsreese.com/creating-debian-guests-on-xen-using-parition-based-filesystem/

- http://martincarstenbach.wordpress.com/2011/04/01/installing-oracle-linux-6-as-a-domu-in-xen/

- http://www.ozmoroz.com/2012/10/troubleshooting-eth0-in-oracle-linux.html